In my daily work, I am responsible for coaching and training less experienced colleagues. One of the primary issues I have to deal with is code quality. Of course, a developer will never deliberately produce bad code. So in order to improve quality, developers first have to gain quality awareness.

In this article I’ll shed some light on a few code quality measurements and on a way to improve quality awareness in your development team.

I am not going to write some philosophical essay about how to interpret the words “code quality”, just because I don’t like writing that, and you probably wouldn’t like to read it. However, I do want to sum up some common tools and measurements that help with measuring it.

There are several tools available that measure the structural sanity of blocks of code (typically methods). Checkstyle and PMD are examples of those tools. They typically raise errors on the level of javadocs, unused variables and parameters, unused private methods, unused imports, excessive use of if/for constructs, and so forth. Although these metrics do not necessarily mean that a class is qualitatively bad, a high number of markers in a set of files does give a fair indication that something is fundamentally wrong. These tools won’t tell you what, though.
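To make this concrete, here is a small sketch of a class that compiles and returns the right answer, yet would likely collect several markers from such tools. The class `OrderTotals` and its methods are hypothetical and invented for illustration, and which findings are actually reported depends on the rule set your team has configured.

```java
import java.util.ArrayList; // unused import
import java.util.List;

// Hypothetical example class; the comments mark what a typical
// static-analysis rule set would be expected to complain about.
class OrderTotals {

    // No Javadoc on a non-private method, and 'currency' is never used.
    static int total(List<Integer> prices, String currency) {
        int count = prices.size(); // unused local variable
        int sum = 0;
        for (int price : prices) {
            if (price > 0) {
                if (price < 1000) {
                    if (price != 13) { // deeply nested if constructs
                        sum += price;
                    }
                }
            }
        }
        return sum;
    }

    // Unused private method.
    private static int legacyTotal(List<Integer> prices) {
        return 0;
    }

    public static void main(String[] args) {
        // 5 + 20 = 25; the value 13 is skipped by the innermost if.
        System.out.println(total(java.util.Arrays.asList(5, 20, 13), "EUR"));
    }
}
```

None of these markers proves the class is broken, but together they suggest that nobody has looked at it critically for a while.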

Another very important but often overrated metric is test coverage. It is usually measured as a single percentage or a combination of percentages. 100% means that all lines of code and both outcomes (true and false) of every if construct have been touched during unit tests. 0% means that not a single line of code is tested. Although a low percentage is something to really worry about, a high percentage is in no way a reason to sit back and relax. It’s easy to make tests pass with 100% code coverage; just leave out the “assert” statements. You’re probably thinking “duh” at the moment, but I’ve seen it happen more than once.
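The following sketch shows how that can happen. It uses plain Java instead of a test framework so that it runs on its own; the class `CoverageWithoutAsserts` and the method `priceAfterDiscount` are hypothetical names, and the bug is planted on purpose.

```java
// Hypothetical example: two "tests" with identical coverage,
// only one of which can ever fail.
class CoverageWithoutAsserts {

    // Code under test, with a deliberate bug:
    // the discount is added instead of subtracted.
    static int priceAfterDiscount(int price, int discount) {
        if (discount > 0) {
            return price + discount; // bug: should be price - discount
        }
        return price;
    }

    // Touches every line and both outcomes of the if,
    // but verifies nothing, so it always "passes".
    static boolean testWithoutAsserts() {
        priceAfterDiscount(100, 10);
        priceAfterDiscount(100, 0);
        return true;
    }

    // Same calls and same coverage, but the results are checked.
    static boolean testWithAsserts() {
        return priceAfterDiscount(100, 10) == 90
            && priceAfterDiscount(100, 0) == 100;
    }

    public static void main(String[] args) {
        System.out.println("without asserts passes: " + testWithoutAsserts());
        System.out.println("with asserts passes:    " + testWithAsserts());
    }
}
```

Both tests would report the same coverage figure, but only the second one notices the bug. The coverage number alone cannot tell them apart.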

The most effective and reliable method, however, is the code review. It is also very time consuming: it requires that one or more developers refrain from being (in the eyes of the customer) productive and give their attention to someone else’s code. Provided that the reviewing developer has a high standard of quality, it will definitely improve the total quality of the code. Of course, there is also the concept of pair programming, which could be seen as a form of continuous code reviewing. It is highly effective and can improve the skills of both developers.

These tools and processes can be very useful, but their overall efficiency depends on one thing: quality awareness within your development team. By “quality awareness” I mean the ability of developers to recognize code smells, or the failure to use design patterns at locations where they would typically be applied. This awareness typically rises as developers gain more experience, but we’d like to give fate a helping hand.

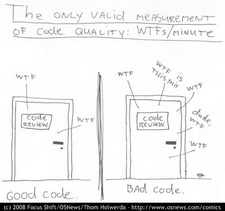

Thom Holwerda came up with a very nice cartoon on the OSNews website about “the only valid measurement of code quality: WTF’s/minute”. It is a funny cartoon, but it also hits the bull’s eye of quality measurement. In fact, at my current project, developers have almost abandoned the words “code smell” in favor of “WTF!”.

In my role as a development coach this triggered two questions: how do I get all developers to effectively produce more “WTF’s”, and what should developers do with them to get code quality up? In these terms, good quality code would mean that even a developer who normally produces a lot of “WTF’s” isn’t able to produce many for a specific piece of code.

A WTF is typically raised when a developer more or less accidentally opens a class file and sees something that just doesn’t seem right. It doesn’t have to be a formal review.

Getting code quality up after a WTF has been raised is easy. Just let the developer who found the WTF fix the code in such a way that the WTF doesn’t apply to that code any more. However, the developer who wrote the bad code will not know about it, and will continue with the same habits.

It is therefore very important to report the WTF to the developer who produced the code. Subversion’s “annotate” or “blame” function provides a means to find out who is responsible for a specific piece of code. The best way to report this back is through an informative and educational discussion, in which everyone can be involved. The refactoring should preferably be done by the developer responsible for the code, perhaps with the assistance of the developer who reviewed it. As a result, quality awareness will have improved within the development team.

When working with an off-shore team, or any other form of collaboration where developers do not share the same location, these discussions are somewhat difficult to hold. In such a case, a tool such as Mylyn or Jira could help with the communication. The disadvantage is that only a few people are really involved in the discussion.

Dealing with the “WTF’s” according to this process has really helped in my current project, and I am sure it can have a positive effect anywhere, as long as it is applied correctly and respectfully among the entire development team.